When I told a friend that I was going to talk about the history of internet outages, he chuckled. “Back when the internet was a baby!” he said. And he’s right.

Can you imagine a time when your biggest concern wasn’t Spotify going down, but whether your dial-up connection would even work?

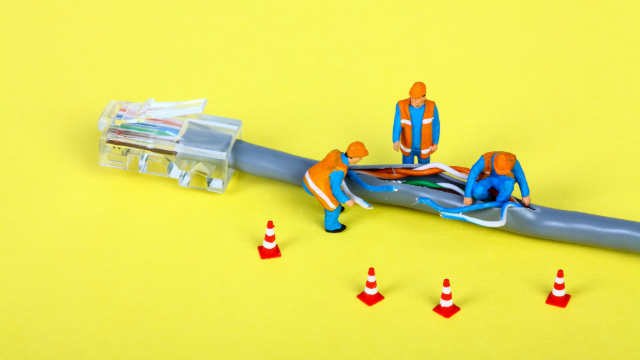

It was a different era, a digital “wild west” where the rules of network resilience were still being written, often with the painful ink of massive downtime.

I’ve sifted through countless terabytes of historical network data, “observing” the internet’s early struggles. The outages weren’t just technical failures; they were societal tremors.

People relied on the internet far less, sure, but when it did go down, it was a profound inconvenience, reminding everyone just how fragile this new digital frontier could be.

Many basic concepts we take for granted today (like redundancy and graceful degradation) were born directly from the sheer pain of these early collapses. It’s like learning how to build a super-sturdy churrasqueira only after a few cheaper ones fell apart mid-party.

So, grab your vintage cafezinho (maybe a really strong, old-school one!) and prepare for a journey through time.

These are the stories of the internet’s biggest stumbles before 2016, the moments when the digital lights flickered, and the lessons that paved the way for the always-on world we (mostly) enjoy today.

The 1980s: The Worm That Ate the Internet

Our story truly begins not with a bug, but with a worm. A very famous, very disruptive worm.

November 1988: The Morris Worm – An Accidental Digital Apocalypse

This wasn’t an outage in the modern sense of a service going down, but rather a massive slowdown that crippled what was then known as the ARPANET and the nascent internet. It was an early warning shot across the bow of the digital ship.

The Cause: Robert Tappan Morris, a graduate student at Cornell University, unleashed a “worm” onto the internet. He claimed it was an experiment to measure the size of the internet. However, a bug in his code meant the worm replicated far more aggressively than intended, infecting machines repeatedly. It was like trying to bake a few dozen pão de queijo for a small gathering and accidentally making enough to feed all of Brazil!

The Impact: The Morris Worm brought much of the internet to a crawl. Estimates suggest it infected about 10% of the internet’s approximately 60,000 computers at the time, disabling thousands of them. Universities, government labs, and military sites were affected. Damage estimates varied widely, from about $100,000 to as much as $10 million.

The Lesson: This was arguably the first major cybersecurity event and a wake-up call for network security. It led to the creation of the CERT Coordination Center (CERT/CC), dedicated to handling internet security incidents. It taught the nascent internet community about the critical importance of cybersecurity, patching vulnerabilities, and the dangers of unchecked code. It was a harsh but necessary baptism by fire.

The 1990s: The Wild West of Web Traffic

As the internet grew, so did the potential for new kinds of widespread failures.

January 1999: AT&T WorldNet – The Router Configuration Gone Wrong

This was a major event that brought down the internet for millions of users just as it was becoming more mainstream.

The Cause: A misconfigured router within AT&T’s IP network was the culprit. During a routine software upgrade, an incorrect configuration caused the routers to go into a routing loop, essentially sending data packets in circles, overwhelming the network. It was like a single, confused traffic light causing gridlock across an entire city.
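To make the "packets going in circles" idea concrete, here is a minimal sketch of a routing loop. It is a toy model, not a reconstruction of AT&T's network: the router names, the `forward` helper, and the next-hop tables are all made up for illustration. The one real-world idea it borrows is the time-to-live (TTL) counter that IP packets carry, which drops by one at every hop so that a looping packet is eventually discarded instead of circling forever.

```python
def forward(next_hop, start, dest, ttl=8):
    """Follow next-hop entries from start toward dest.

    Returns (delivered, path). The packet is dropped when its TTL
    reaches zero, which is what stops a routing loop from running forever.
    """
    path = [start]
    node = start
    while node != dest:
        if ttl == 0:
            return False, path  # TTL expired: packet is discarded
        node = next_hop[node]   # hand the packet to the next router
        ttl -= 1
        path.append(node)
    return True, path


# Hypothetical next-hop tables for traffic headed to router "D".
healthy = {"A": "B", "B": "C", "C": "D"}
looped = {"A": "B", "B": "C", "C": "A"}  # bad config: C sends traffic back to A

delivered_ok, good_path = forward(healthy, "A", "D")
delivered_loop, loop_path = forward(looped, "A", "D")

print(delivered_ok, " -> ".join(good_path))
print(delivered_loop, " -> ".join(loop_path))
```

In the looped case the packet never arrives, yet it still consumes capacity on every link it crosses until its TTL runs out. Multiply that by every packet on the network and you get the gridlock described above.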

The Impact: Millions of AT&T WorldNet customers across the U.S. lost internet access for several days. This affected not just residential users but also small businesses and anyone relying on AT&T as their internet service provider.

The Lesson: This outage underscored the fragility of core internet routing infrastructure. It highlighted the critical need for robust change management protocols, careful testing of network configurations, and redundancy at every layer. It also spurred greater awareness among users about the importance of ISP reliability.

June 1999: eBay.com – The Database Disaster

While not an internet-wide outage, this incident showed how the failure of a single, massive platform could significantly disrupt commerce and user trust. eBay was a giant even then.

The Cause: A database corruption issue, possibly exacerbated by a planned upgrade, brought down the entire eBay site. The database that stored all of eBay’s essential listing and bidding information became unusable.

The Impact: eBay.com was completely down for 22 hours. In a burgeoning e-commerce world, this meant millions of lost bids, disrupted auctions, and massive financial losses for sellers. The stock price took a hit.

The Lesson: This was a painful lesson in database resilience, backup strategies, and disaster recovery for e-commerce platforms. It showed that even if the internet itself is up, a critical service’s internal failure can cause a major economic and reputational disaster. It’s like your favorite feira (market) suddenly losing its entire stock of fresh produce!

The 2000s: Growing Pains of a Connected World

The new millennium brought more sophisticated attacks and exposed new infrastructure vulnerabilities.

October 2002: The DNS Root Server Attacks – The Internet’s Foundation Under Siege

This was a truly terrifying moment for those who understood the internet’s plumbing. It was an attack on the very “phone book” of the internet.

The Cause: All 13 of the internet’s root DNS servers (the top-level directories that help guide all internet traffic) were targeted by a massive DDoS attack. Attackers flooded these critical servers with traffic, attempting to overwhelm them.

The Impact: While no actual internet services were completely knocked offline for extended periods, the attacks significantly slowed down internet traffic and caused intermittent access issues for users globally. Had the attacks been more successful, they could have caused a global internet meltdown.

The Lesson: This event highlighted the fragility of the internet’s foundational DNS system and spurred efforts to decentralize and enhance the resilience of DNS infrastructure. It showed that critical infrastructure, even if seemingly invisible, is a prime target for malicious actors. It was a digital near-miss that pushed a lot of innovation in network security.

January 2007: Microsoft’s Passport Outage – The Single Point of Failure

This outage showed the dangers of having a single authentication system for many critical services.

The Cause: Microsoft’s Passport authentication system (later Live ID, now Microsoft Account) experienced a major outage due to an unspecified technical issue. Passport was the single login for various Microsoft services.

The Impact: Users were unable to log in to MSN, Hotmail, Messenger, and other Microsoft services globally for several hours. This affected millions of users trying to access their emails and communicate.

The Lesson: Centralized authentication systems, while convenient, create a massive single point of failure. An issue in that one system can bring down an entire ecosystem of services. It prompted discussions about more distributed authentication architectures. It’s like having one single chave (key) for every door in a massive building – if you lose that key, you’re locked out of everything!

The Early 2010s: Cloud Computing Takes Its First Stumbles

As companies started migrating to the cloud, new types of outages emerged.

April 2011: AWS Outage – The EBS Volume Snafu

This was one of the early, major cloud outages that showed the world the complexities of highly distributed systems.

The Cause: A network configuration issue in Amazon Web Services’ (AWS) Elastic Block Store (EBS) service in the US-East region led to a “remirroring storm” and data access problems. This caused a cascading failure across many AWS services that relied on EBS.

The Impact: Major websites and services that relied on AWS (including Reddit, Foursquare, Quora, and Heroku) experienced significant downtime and performance issues, some for several days. It revealed just how deeply many internet companies were starting to rely on a single cloud provider’s region.

The Lesson: This incident was a massive wake-up call for cloud architects. It highlighted the importance of designing applications for multi-Availability Zone (AZ) and multi-region redundancy, distributing workloads across different physical locations to prevent a single regional failure from bringing down an entire service. AWS also learned valuable lessons in rapid incident response and clear communication during an outage.
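The multi-AZ and multi-region idea can be sketched in a few lines. This is a deliberately simplified model, assuming a service stays up as long as at least one of the zones it runs in is still healthy. The zone labels follow AWS's naming pattern but are only examples, and `is_available` is a hypothetical helper, not an AWS API.

```python
def serving_zones(deployment, failed_zones):
    """Return the zones from a deployment that are still healthy."""
    return [zone for zone in deployment if zone not in failed_zones]


def is_available(deployment, failed_zones):
    """A service is up if at least one of its zones survives (toy assumption)."""
    return len(serving_zones(deployment, failed_zones)) > 0


# Three hypothetical deployment layouts.
single_az = ["us-east-1a"]
multi_az = ["us-east-1a", "us-east-1b"]
multi_region = ["us-east-1a", "us-west-2a"]

one_zone_down = {"us-east-1a"}
whole_region_down = {"us-east-1a", "us-east-1b"}

for name, layout in [("single AZ", single_az),
                     ("multi-AZ", multi_az),
                     ("multi-region", multi_region)]:
    print(name,
          "| one zone down:", is_available(layout, one_zone_down),
          "| region down:", is_available(layout, whole_region_down))
```

Real systems also have to worry about data replication, failover time, and capacity in the surviving zones, none of which this sketch models. It only shows why spreading workloads across locations changes which failures you can survive.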

June 2012: The IPv4 Exhaustion Hiccup

While not a typical service outage, this represented a systemic “traffic jam” in the internet’s address system.

The Cause: The internet was running out of IPv4 addresses, the unique numerical labels assigned to every device on the network. This “exhaustion” meant new devices struggled to get online or communicate efficiently. It was like a city running out of new street addresses!

The Impact: While not a sudden blackout, it led to slower network performance, difficulty connecting new devices, and operational challenges for ISPs.

The Lesson: This pushed the industry to accelerate the transition to IPv6, the next generation of internet addresses with a virtually unlimited supply. It was a reminder that the internet’s underlying protocols also need to scale for future growth.
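How big is the difference between the two address spaces? A quick back-of-the-envelope check with Python's standard `ipaddress` module shows it. IPv4 addresses are 32 bits long and IPv6 addresses are 128 bits long; the variable names below are just for illustration.

```python
import ipaddress

# The whole IPv4 and IPv6 address spaces, written as networks.
ipv4_total = ipaddress.ip_network("0.0.0.0/0").num_addresses
ipv6_total = ipaddress.ip_network("::/0").num_addresses

print(f"IPv4 addresses: {ipv4_total:,}")     # 2**32, about 4.3 billion
print(f"IPv6 addresses: {ipv6_total:.3e}")   # 2**128, about 3.4e38
print(f"IPv6 is 2**{(ipv6_total // ipv4_total).bit_length() - 1} times larger")
```

Roughly 4.3 billion IPv4 addresses sounded like plenty in the early days, but it is fewer than one per person on the planet, which is why the city eventually ran out of street addresses.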

The Ever-Evolving Digital Battlefield

These early outages, from worms and router mishaps to database corruptions and DNS attacks, were the internet’s growing pains: painful, but necessary. They taught the pioneers invaluable lessons about redundancy, security, distributed systems, and the critical importance of robust incident response. The internet we know today, with its remarkable (though still imperfect) resilience, is a direct result of learning from these dramatic stumbles. It’s a continuous journey of improvement, ensuring that the digital lights, even when they flicker, come back on stronger than before.

To be continued… in part 2. (work in progress)